Phase 1: Fragmented Systems and Explicit Control

The geological data workflow underpinning modern mining was built on spreadsheets, flat files, and manual interpretation. For decades, exploration and resource geologists captured drillhole data in Excel workbooks and Access databases, transferred assay results via CSV, and constructed 3D geological models by manually wireframing drillhole intercepts.

These methods produced the JORC- and NI 43-101-compliant resource estimates that financed billions of dollars in mining investment worldwide.

They were built for compliance, not engineering.

The structural risks of this phase are about the file formats and data management infrastructure, not the practitioners or the methodology. Spreadsheets lack enforced validation rules and immutable audit trails. A cell can be overwritten without any record of the previous value.

CSV file transfers introduce a class of errors structural to the format itself:

- Leading zeros stripped from drillhole IDs (DH001 becomes DH1, breaking relational joins)

- Date format inconsistencies between US (MM/DD/YYYY) and Australian (DD/MM/YYYY) conventions

- Decimal precision truncation and character encoding mismatches that corrupt lithological descriptions
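
Two of these failure modes can be reproduced with a minimal sketch that uses only Python's standard library. The sample rows, column names, and helper functions are hypothetical and chosen for illustration. A purely numeric hole ID is used because it is the simplest case in which numeric coercion strips leading zeros.

```python
import csv
import io
from datetime import datetime

# Hypothetical CSV export; the column names are illustrative only.
RAW = "hole_id,logged_date\n007,03/04/2024\n012,25/12/2023\n"

def naive_id(row):
    # Coercing the ID to a number, as many import tools do, strips leading zeros.
    return int(row["hole_id"])

def safe_row(row, date_format="%d/%m/%Y"):
    # Keep the ID as text and parse dates with one explicit, documented format.
    logged = datetime.strptime(row["logged_date"], date_format).date()
    return row["hole_id"], logged

rows = list(csv.DictReader(io.StringIO(RAW)))
naive_ids = [naive_id(r) for r in rows]   # IDs no longer match the collar table
safe_rows = [safe_row(r) for r in rows]   # IDs and dates preserved as intended

# The same text is a different day depending on the assumed convention.
as_us = datetime.strptime(rows[0]["logged_date"], "%m/%d/%Y").date()
as_au = safe_rows[0][1]
```

The design point is that the reader, not the file, decides types: IDs are read as text and the date convention is stated once rather than inferred per file.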

Each of these errors has to be found and corrected by hand, and that time cost compounds across the organisation.

When a CSV import silently truncates assay values from four decimal places to two, the downstream impact on a kriged block model grade estimate can be material.
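
The arithmetic behind that concern can be sketched with invented numbers. The four assay values below are hypothetical, and the comparison uses a plain arithmetic mean rather than a kriged estimate, so it only illustrates the direction and rough size of the bias that truncation introduces.

```python
from decimal import Decimal, ROUND_DOWN

# Hypothetical assay grades reported to four decimal places.
full_precision = [Decimal("0.4567"), Decimal("0.1289"),
                  Decimal("0.0951"), Decimal("0.0099")]

# Simulate an import that silently truncates to two decimal places.
truncated = [v.quantize(Decimal("0.01"), rounding=ROUND_DOWN)
             for v in full_precision]

mean_full = sum(full_precision) / len(full_precision)
mean_truncated = sum(truncated) / len(truncated)

# Relative bias of the truncated mean, in percent (negative means understated).
bias_percent = (mean_truncated - mean_full) / mean_full * 100
```

Truncation always rounds toward zero, so the error does not average out: every sample is biased in the same direction, and the lowest grades lose the largest share of their value.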

Julian Poniewierski documented a block model error that overvalued reserves by 20% for six years before detection. An AMEC audit found only 4 of 26 resource projects had adequate QAQC protocols.

The regulatory context amplifies the risk. Under JORC Code Table 1, Section 1, the Competent Person must document the nature and quality of sampling, including sample preparation, security, sub-sampling techniques, and QAQC protocols. Under NI 43-101 Part 3, the Qualified Person must describe data verification procedures.

When the underlying data management system is a collection of spreadsheets with no enforced validation or audit trail, the Competent Person is signing off on an inherently fragile data foundation.

Phase 2: Centralised Relational Databases: The Secure Hub

The shift from fragmented file-based workflows to purpose-built geological database management systems is the single most important risk-mitigation step in the geological data architecture. These platforms replace spreadsheets and CSV transfers with structured relational databases that enforce data integrity at the point of capture.

In practice, this means rule-based checks on every record as it is entered. Collar coordinates must fall within the defined tenement boundary. Downhole surveys must have dip and azimuth values within valid ranges.

A dip value of 95 degrees is flagged immediately, not discovered six months later when the resource geologist notices a drillhole trace projecting above the topographic surface.
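
A point-of-entry range check of this kind might look like the sketch below. The `SurveyStation` type, the `validate_station` function, and the sign and range conventions (dip from -90 to +90 degrees, azimuth from 0 up to but not including 360) are assumptions for illustration, not the behaviour of any particular platform.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class SurveyStation:
    hole_id: str
    depth_m: float
    dip_deg: float      # assumed convention: -90 (straight down) to +90
    azimuth_deg: float  # assumed convention: 0 <= azimuth < 360

def validate_station(station):
    """Return a list of human-readable errors; an empty list means valid."""
    errors = []
    if station.depth_m < 0:
        errors.append(f"depth {station.depth_m} m is negative")
    if not -90.0 <= station.dip_deg <= 90.0:
        errors.append(f"dip {station.dip_deg} outside [-90, 90]")
    if not 0.0 <= station.azimuth_deg < 360.0:
        errors.append(f"azimuth {station.azimuth_deg} outside [0, 360)")
    return errors

# The 95-degree dip from the text is rejected before it reaches the database.
bad = validate_station(SurveyStation("DH001", 50.0, 95.0, 120.0))
good = validate_station(SurveyStation("DH001", 50.0, -60.0, 120.0))
```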

Assay values must pass QAQC checks (certified reference materials, field duplicates, and blanks) before being committed to the production database. QAQC protocols add only 1–2% to exploration budgets but catch up to 70% of field-generated data errors.
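
The three control types named above could be screened with checks like the following sketch. The thresholds are assumptions: a warning beyond 2 standard deviations and a failure beyond 3 for certified reference materials, and a blank limit of 5 times the detection limit. They are common conventions rather than fixed rules, and each project should set and document its own. All function names are hypothetical.

```python
def classify_crm(measured, certified, std_dev):
    """Classify a certified reference material result by its z-score."""
    z = abs(measured - certified) / std_dev
    if z > 3.0:          # assumed failure threshold
        return "fail"
    if z > 2.0:          # assumed warning threshold
        return "warning"
    return "pass"

def blank_passes(measured, detection_limit, max_multiple=5.0):
    """A blank should report close to nothing; the multiple is an assumption."""
    return measured <= max_multiple * detection_limit

def duplicate_relative_difference(original, duplicate):
    """Absolute difference of a field duplicate pair relative to the pair mean."""
    pair_mean = (original + duplicate) / 2.0
    if pair_mean == 0:
        return 0.0
    return abs(original - duplicate) / pair_mean

crm_results = [classify_crm(m, certified=1.00, std_dev=0.02)
               for m in (1.02, 1.05, 1.10)]
```

A batch would typically be committed to the production database only if its controls pass; what happens on a warning is a policy choice this sketch does not make.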

The relational database schema follows a standard architecture: collar tables (drillhole identity, location, orientation), survey tables (downhole directional measurements), interval tables (lithology, alteration, mineralisation), and point/sample tables (assay results, QAQC data). Every record is linked through foreign key relationships that prevent orphaned data. An assay result cannot exist without a corresponding collar, and a collar cannot be deleted while associated survey and interval data exist.
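
The foreign key behaviour described here can be demonstrated with a minimal sketch using SQLite from Python's standard library. The table and column names are hypothetical and the schema is heavily simplified; commercial geological databases use far richer schemas.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# SQLite only enforces foreign keys when this is switched on per connection.
conn.execute("PRAGMA foreign_keys = ON")
conn.executescript("""
CREATE TABLE collar (
    hole_id   TEXT PRIMARY KEY,
    easting   REAL NOT NULL,
    northing  REAL NOT NULL,
    elevation REAL NOT NULL
);
CREATE TABLE survey (
    hole_id     TEXT NOT NULL REFERENCES collar(hole_id) ON DELETE RESTRICT,
    depth_m     REAL NOT NULL,
    dip_deg     REAL NOT NULL CHECK (dip_deg BETWEEN -90 AND 90),
    azimuth_deg REAL NOT NULL CHECK (azimuth_deg >= 0 AND azimuth_deg < 360),
    PRIMARY KEY (hole_id, depth_m)
);
CREATE TABLE assay (
    hole_id TEXT NOT NULL REFERENCES collar(hole_id) ON DELETE RESTRICT,
    from_m  REAL NOT NULL,
    to_m    REAL NOT NULL CHECK (to_m > from_m),
    au_gpt  REAL,
    PRIMARY KEY (hole_id, from_m)
);
""")

conn.execute("INSERT INTO collar VALUES ('DH001', 500000.0, 6500000.0, 350.0)")
conn.execute("INSERT INTO assay VALUES ('DH001', 0.0, 1.0, 0.45)")

def try_sql(sql):
    """Run one statement and report whether the database accepted it."""
    try:
        conn.execute(sql)
        return "ok"
    except sqlite3.IntegrityError as exc:
        return f"rejected: {exc}"

orphan_assay = try_sql("INSERT INTO assay VALUES ('DH999', 0.0, 1.0, 0.12)")
collar_delete = try_sql("DELETE FROM collar WHERE hole_id = 'DH001'")
bad_dip = try_sql("INSERT INTO survey VALUES ('DH001', 50.0, 95.0, 120.0)")
```

All three statements are rejected by the database itself, so the rules hold regardless of which application or user submits the data.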

These systems provide immutable audit trails. Every data entry, edit, and deletion is logged with a timestamp and user identity. This is a requirement for JORC and NI 43-101 compliance audits, not merely good practice.
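
One way to approximate such a log is with database triggers, sketched below in SQLite. The table names, the `edited_by` column standing in for an authenticated user identity, and the trigger names are all assumptions. Triggers alone do not make a log truly immutable, since a privileged user could drop them; a production system would also need access controls.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE assay (
    sample_id TEXT PRIMARY KEY,
    au_gpt    REAL,
    edited_by TEXT NOT NULL          -- stand-in for an authenticated user
);
CREATE TABLE assay_audit (
    audit_id   INTEGER PRIMARY KEY AUTOINCREMENT,
    sample_id  TEXT NOT NULL,
    old_value  REAL,
    new_value  REAL,
    changed_by TEXT NOT NULL,
    changed_at TEXT NOT NULL DEFAULT (strftime('%Y-%m-%dT%H:%M:%fZ', 'now'))
);
-- Record the previous and new value on every grade edit.
CREATE TRIGGER assay_log_update AFTER UPDATE OF au_gpt ON assay
BEGIN
    INSERT INTO assay_audit (sample_id, old_value, new_value, changed_by)
    VALUES (OLD.sample_id, OLD.au_gpt, NEW.au_gpt, NEW.edited_by);
END;
-- Make the log append-only: edits and deletions of audit rows are refused.
CREATE TRIGGER assay_audit_no_update BEFORE UPDATE ON assay_audit
BEGIN
    SELECT RAISE(ABORT, 'audit log is append-only');
END;
CREATE TRIGGER assay_audit_no_delete BEFORE DELETE ON assay_audit
BEGIN
    SELECT RAISE(ABORT, 'audit log is append-only');
END;
""")

conn.execute("INSERT INTO assay VALUES ('S0001', 1.2345, 'field_geo')")
conn.execute("UPDATE assay SET au_gpt = 1.23, edited_by = 'db_admin' "
             "WHERE sample_id = 'S0001'")

audit_rows = conn.execute(
    "SELECT sample_id, old_value, new_value, changed_by FROM assay_audit"
).fetchall()

try:
    conn.execute("DELETE FROM assay_audit")
    tamper_blocked = False
except sqlite3.DatabaseError:
    tamper_blocked = True
```

Unlike an overwritten spreadsheet cell, the previous value survives the edit and can be produced during an audit.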

Platforms supporting Phase 2 maturity:

- acQuire GIM Suite — the industry-standard geological information management system, centralising drillhole, sample, and assay data with configurable QAQC workflows

- Seequent MX Deposit — cloud-native drillhole data management, enabling real-time collaboration between field geologists logging core at the drill site and office-based resource teams

- Datamine Fusion — integrated data management and geological modelling environment, reducing software transitions in the workflow

Cloud-native platforms eliminate the latency of CSV file transfers. A field geologist logging core at a remote drill site enters data into the production database, where it is immediately validated against defined QAQC rules and made available to the resource geologist running estimation workflows at the corporate office. No more emailing spreadsheets and waiting days for import.

One detail often missed: the desurveying problem. Different software packages use different algorithms (minimum curvature, raw tangent, balanced tangential) to reconstruct the 3D path of a drillhole from downhole survey measurements.

When a drillhole database exports spatial data to a modelling package, the desurvey algorithm applied can produce materially different coordinate positions for the same assay interval. In deep holes with deviation, this offset can reach several metres — enough to place an ore intercept on the wrong side of a fault plane. This is an algorithmic choice that must be standardised and documented across the entire data workflow.
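
The divergence between algorithms can be seen in a short sketch of the three methods. Several conventions here are assumptions: dip is negative downward, azimuth is clockwise from north, coordinates are (east, north, up), and the tangential method applies the deeper station's orientation over the whole interval (some packages use the shallower station instead). The test hole is deliberately extreme, a single interval that turns from vertical to horizontal along a quarter circle of 100 m radius, because its true endpoint is known exactly.

```python
import math

def direction(dip_deg, azimuth_deg):
    """Unit vector (east, north, up) for a dip and azimuth in degrees."""
    dip, az = math.radians(dip_deg), math.radians(azimuth_deg)
    return (math.cos(dip) * math.sin(az),
            math.cos(dip) * math.cos(az),
            math.sin(dip))

def step(d1, d2, length, method):
    """Displacement over one survey interval between directions d1 and d2."""
    if method == "tangential":
        return tuple(length * c for c in d2)
    ratio = 1.0
    if method == "minimum_curvature":
        dot = max(-1.0, min(1.0, sum(a * b for a, b in zip(d1, d2))))
        beta = math.acos(dot)  # dogleg angle between the two directions
        if beta > 1e-9:
            ratio = math.tan(beta / 2.0) / (beta / 2.0)
    elif method != "balanced_tangential":
        raise ValueError(f"unknown method: {method}")
    return tuple(0.5 * length * ratio * (a + b) for a, b in zip(d1, d2))

def desurvey(collar, stations, method):
    """stations: (depth_m, dip_deg, azimuth_deg) tuples sorted by depth."""
    x, y, z = collar
    path = [(x, y, z)]
    for (md1, dip1, az1), (md2, dip2, az2) in zip(stations, stations[1:]):
        dx, dy, dz = step(direction(dip1, az1), direction(dip2, az2),
                          md2 - md1, method)
        x, y, z = x + dx, y + dy, z + dz
        path.append((x, y, z))
    return path

ARC_LENGTH = 50.0 * math.pi  # quarter circle of radius 100 m
stations = [(0.0, -90.0, 90.0), (ARC_LENGTH, 0.0, 90.0)]
METHODS = ("tangential", "balanced_tangential", "minimum_curvature")
ends = {m: desurvey((0.0, 0.0, 0.0), stations, m)[-1] for m in METHODS}
```

For this arc, minimum curvature recovers the true endpoint 100 m east and 100 m below the collar, while the two tangent methods place it elsewhere. Real surveys have much closer station spacing and smaller doglegs, so the offsets are smaller, but they accumulate with depth.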

Phase 3: Implicit Modelling and API-Integrated Ecosystems

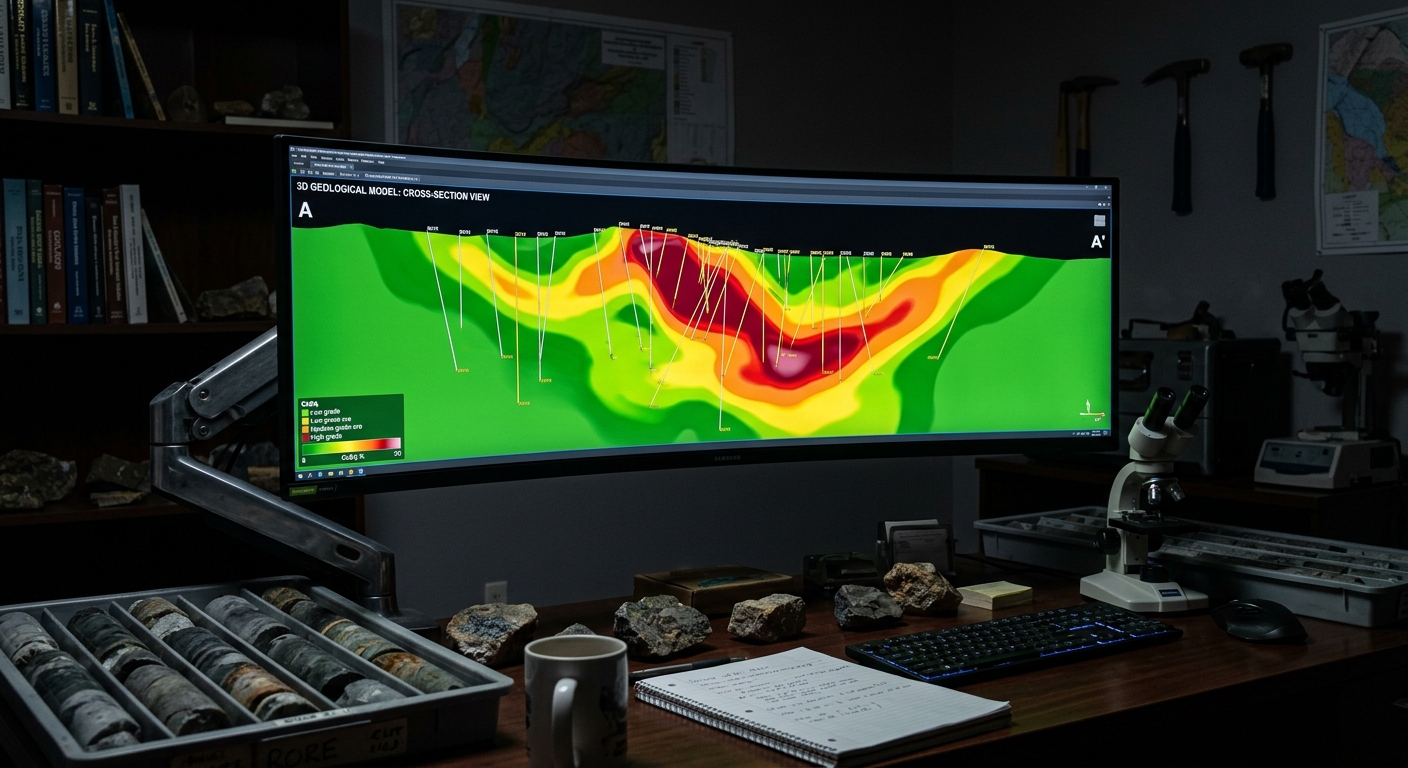

The evolution from explicit wireframing to implicit modelling represents a fundamental change in how geological surfaces and volumes are constructed from drillhole data. Instead of manually connecting intercepts point-by-point, implicit modelling uses mathematical functions (radial basis functions, distance functions, and interpolants) to generate 3D iso-surfaces from the database. The geological model is derived algorithmically from the data, rather than constructed manually from the geologist's interpretation.
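
The core idea can be sketched in a few lines of pure Python: fit a radial basis function interpolant to signed-distance values, then treat the zero level of that function as the contact surface. Everything here is an assumption for illustration: the linear kernel (the function value is simply distance), the absence of a polynomial drift term, the 2D section coordinates, and the invented contact picks. Commercial implementations are considerably more sophisticated.

```python
import math

def solve(matrix, rhs):
    """Solve a small dense linear system by Gaussian elimination with pivoting."""
    n = len(rhs)
    m = [row[:] + [b] for row, b in zip(matrix, rhs)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x

def fit_rbf(points, values):
    """Find weights so that the interpolant reproduces every data value."""
    kernel = [[math.dist(p, q) for q in points] for p in points]
    return solve(kernel, values)

def evaluate(points, weights, query):
    """Evaluate the fitted scalar field at any location."""
    return sum(w * math.dist(p, query) for p, w in zip(points, weights))

# Hypothetical section (x, z): contact picks carry value 0; control points
# 10 m above and below carry +10 and -10 (a signed distance to the contact).
contact = [(0.0, -10.0), (50.0, -12.0), (100.0, -20.0)]
points = (contact
          + [(x, z + 10.0) for x, z in contact]
          + [(x, z - 10.0) for x, z in contact])
values = [0.0] * 3 + [10.0] * 3 + [-10.0] * 3

weights = fit_rbf(points, values)
residuals = [abs(evaluate(points, weights, p) - v)
             for p, v in zip(points, values)]
```

The modelled contact is wherever `evaluate` returns zero. Adding a new intercept only means appending a point and refitting, which is why these models update without manual rework.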

The operational advantage is speed and repeatability. When new drillhole data is added to the database, the implicit model updates automatically, with no manual rebuilding of wireframes.

This transforms the geological model from a quarterly deliverable into a tool that incorporates new data weekly or daily. For operations with ongoing drill campaigns where the resource model must keep pace with new information, this responsiveness is operationally critical.

Platforms supporting Phase 3 maturity:

- Seequent Leapfrog Geo — pioneered commercial implicit geological modelling, providing rapid generation of dynamic 3D models from the drillhole database

- Maptek Vulcan — offers both implicit and explicit modelling within the same environment, allowing geologists to choose the appropriate methodology for different geological domains within a single deposit

- Micromine Origin — supports over 70 import formats, positioning itself as a bridge between disparate data sources

- K-MINE — integrated geological modelling, resource estimation, and mine planning platform

An important caveat: implicit models can over-smooth complex geological boundaries. A mathematical interpolant does not "know" that a fault offsets the orebody by 15 metres unless a geologist explicitly constrains it with structural data.

Implicit modelling is not a replacement for geological judgement. It requires experienced geological intervention to constrain mathematical surfaces against known structural controls, contact relationships, and geological first principles.

The end goal is an API-integrated ecosystem that eliminates CSV file transfers entirely. The drillhole database pushes validated data via API to the modelling environment, the model updates, and the block model pushes to the mine planning suite.

This end-to-end data pipeline removes the entire class of transfer-induced data corruption: no more stripped leading zeros, no more date format mismatches, no more silent decimal truncation.
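
What makes a typed interface safer than a CSV can be sketched with a hypothetical payload. The `AssayRecord` type, its field names, and the JSON layout are assumptions for illustration and do not correspond to any vendor's API. The ID travels as text, the date as ISO 8601, and grades as decimal strings, so nothing is left for the receiving system to guess.

```python
import json
from dataclasses import dataclass
from datetime import date
from decimal import Decimal

@dataclass(frozen=True)
class AssayRecord:
    hole_id: str
    sampled_on: date
    from_m: Decimal
    to_m: Decimal
    au_gpt: Decimal

def to_payload(record):
    """Serialise with explicit types: text ID, ISO date, decimal strings."""
    return json.dumps({
        "hole_id": record.hole_id,
        "sampled_on": record.sampled_on.isoformat(),
        "from_m": str(record.from_m),
        "to_m": str(record.to_m),
        "au_gpt": str(record.au_gpt),
    })

def from_payload(text):
    """Rebuild the record; a malformed field raises instead of passing silently."""
    raw = json.loads(text)
    return AssayRecord(
        hole_id=raw["hole_id"],
        sampled_on=date.fromisoformat(raw["sampled_on"]),
        from_m=Decimal(raw["from_m"]),
        to_m=Decimal(raw["to_m"]),
        au_gpt=Decimal(raw["au_gpt"]),
    )

original = AssayRecord("DH001", date(2024, 4, 3),
                       Decimal("12.00"), Decimal("13.00"), Decimal("0.0049"))
round_tripped = from_payload(to_payload(original))
```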

The Open Mining Format (OMF v2.0 beta, Rust-based) is an emerging open standard designed to bridge the data exchange gap between disparate geological and mining software ecosystems. The AMIRA P1208 interoperability project and Seequent's Evo platform (launched at PDAC 2025) represent industry-level efforts to establish standardised data exchange protocols.

Centralised version control systems — Seequent Central and Deswik.MDM — enable multi-user geological model management with branching, merging, and conflict resolution modelled on software engineering practices.

The vendor consolidation landscape provides context for long-term procurement decisions:

- Seequent (Leapfrog, MX Deposit, Central) — acquired by Bentley Systems for $1.05B

- GEOVIA (Surpac, Whittle) — owned by Dassault Systèmes, acquired for $360M

- Deswik — acquired by Sandvik

- Micromine — acquired by Weir Group

- acQuire — part of Constellation Software

- Maptek, maxgeo, Datamine — independently held

Ownership determines which products integrate natively. A modelling platform owned by the same parent company as a mine planning suite is more likely to develop native integration than platforms from competing ownership groups.

What This Means for Your Software Evaluation

The geological data architecture decision is not primarily a software feature comparison. It is a risk decision.

Consider your operation's position:

- Single-site operation with stable geology: If your deposit is well-understood, drilling campaigns are infrequent, and the resource model is mature, a Phase 2 centralised database with validated QAQC workflows and full audit trails provides the data integrity and compliance documentation you need. Implicit modelling may offer less value when the model changes quarterly rather than weekly.

- Multi-site portfolio with active drill campaigns: If you are managing geological data across multiple projects, incorporating new drillhole data weekly, and requiring rapid model updates for short-term planning, Phase 3 implicit modelling with API integration provides the agility and consistency required. The time saved on model updates compounds across multiple sites.

- Junior explorer with limited IT infrastructure: Phase 1 tools are a valid starting point for early-stage projects with small datasets. Building with migration to Phase 2 in mind (structured data templates, documented QAQC protocols, standardised naming conventions) prevents costly data remediation when the project advances to resource estimation and feasibility.

Diagnostic questions for your evaluation:

- Does your database enforce QAQC validation at the point of data entry, or are validation checks applied manually after import?

- Can your geological modelling software connect to the drillhole database via API, or does the workflow require CSV/Excel file exports?

- Does your entire data pipeline, from drillhole capture to block model, produce an immutable audit trail that satisfies JORC or NI 43-101 Competent Person requirements?

- Is the desurvey algorithm standardised across your database and modelling software, and is the choice documented?

- Can your modelling environment handle both implicit and explicit methods, allowing geologists to choose the appropriate approach for each geological domain?

- What is your organisation's data migration strategy? If you are moving from Phase 1 spreadsheets to a Phase 2 centralised database, has the legacy data been audited for structural errors that will propagate into the new system if imported uncleaned?

Frequently Asked Questions

Common questions on this topic, answered concisely.

- What is the difference between explicit and implicit geological modelling?

- Explicit modelling constructs 3D geological surfaces by manually connecting drillhole intercepts point-by-point, giving geologists direct interpretive control over every vertex — critical for complex deposits with sharp structural boundaries or fault offsets. Implicit modelling uses mathematical interpolants (typically radial basis functions) to generate geological volumes automatically from drillhole data, producing reproducible, auditable surfaces that update in minutes when new data arrives. Leapfrog Geo pioneered implicit modelling at commercial scale; Micromine, Maptek Vulcan, and GEOVIA Surpac now offer hybrid explicit/implicit workflows.

- Why do mining resource estimates fail QAQC audits?

- An AMEC (Association of Mineral Exploration Canada) audit found that only 4 of 26 resource projects had adequate QAQC protocols. Common failures include unvalidated assay duplicates, missing blank or standard reference samples, undocumented compositing rules, and drillhole database corruption (duplicate collar coordinates, missing intervals, assay rounding errors). The consequences are material: Rubicon Minerals constructed a $700 million mine on a resource estimate that was subsequently revised downward by 87%, from 3.3 million ounces to 413,000 ounces.

- What is the role of a drillhole database in mining?

- A drillhole database is the centralised, validated record of every hole drilled on a project: collar coordinates, downhole survey data, lithology, assay intervals, QAQC samples, and density measurements. Platforms like acQuire GIM Suite and MX Deposit enforce validation rules at the point of data entry, maintain immutable audit trails required for JORC and NI 43-101 compliance, and serve as the single source of truth that downstream modelling, planning, and reporting tools consume via read-only APIs.

- How does RBF interpolation improve resource modelling?

- Radial basis function (RBF) interpolation generates continuous geological surfaces from sparse drillhole data by solving a system of equations that honours every data point while producing a mathematically smooth volume. Unlike explicit wireframing, RBF surfaces are reproducible (same inputs always produce the same output), update automatically when new drillhole data arrives, and carry implicit uncertainty information that downstream block modelling can propagate into grade-tonnage and financial sensitivity analysis.

Related Categories

Explore the software categories referenced in this article.

Database Management

Phase 1/2: Centralised drillhole data with QAQC validation: relational databases, audit trails, and regulatory compliance.

Geology & Resource Modelling

Phase 2/3: Explicit and implicit 3D geological modelling: wireframing, RBF interpolation, and block model generation.

Mine Planning & Design

Downstream consumer: The block model feeds directly into pit optimisation, scheduling, and production planning.